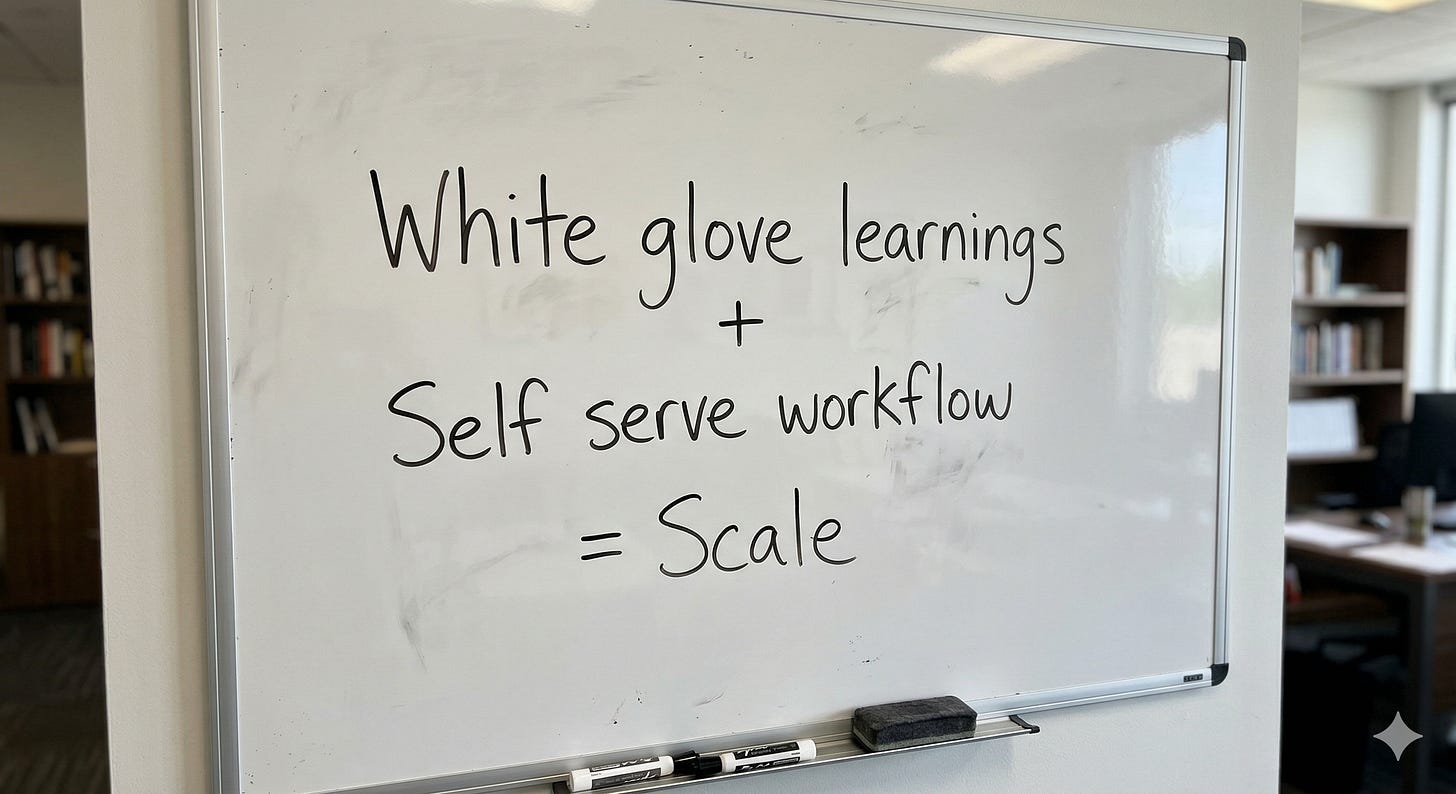

Turning a White-Glove Process Into a Self-Serve Workflow

Denise is CEO and co-founder of Variata, an AI-powered testing platform. Her product lets teams validate their websites and apps by describing what they want tested instead of writing brittle, step-by-step automation scripts. Variata’s AI figures out how to navigate the site, run the flows, and report what broke.

It works. Enterprise customers love it. But there’s a catch: every new customer goes through a white-glove onboarding where Denise’s team manually authors the test scenarios alongside them. They identify the highest-value flows, tune the inputs to the right level of specificity, and build, in just a day, a working setup that would’ve taken an in-house QA team weeks to develop.

That process produces great outcomes, and teams are already saving a lot of time with Variata. But now Denise is thinking about scale.

So she set out to build the self-serve version, including an authoring tool that helps users create their own test scenarios without her team in the room. She’s done discovery across paying customers, free trial users, and casual evaluators. She’s identified a spectrum of user inputs ranging from “just test my site” to granular click-by-click scripts. She’s built three prototype classifiers to guide users toward the sweet spot.

These are all the pieces. The question is how they fit together.

Denise and I help each other as founders, and this month we used her self-service challenge as the topic of our conversation. It gave me a chance to work through a real product planning scenario that will help us build Stoa (our conversational planning tool), and it gave her an outside perspective. The session surfaced some strong product principles, so I decided to write it up and share it.

The Spectrum Problem

Here’s the tension Denise was staring at. Variata’s AI works best when it gets input at a middle level of abstraction — milestones and expected outcomes, not pixel-level instructions. “Verify the sign-up flow works and the user lands on the dashboard” is better than “click the email field, type test@gmail.com, click the password field, type abc123, click Submit.” The specific version latches onto details that change constantly. The milestone version is durable.

But users don’t naturally land in that middle zone. QA engineers tend to over-specify. Product managers and executives tend to under-specify. And the “just test my site” crowd gives almost nothing to work with.
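To make the spectrum concrete, here is a toy sketch that places a prompt at one of three points on it. It is not how Variata’s classifiers work; the word lists, the thresholds, and the `classify_specificity` name are all assumptions chosen for illustration, and a real version would more likely lean on an LLM than on keyword counts.

```python
import re

# Hypothetical word lists, chosen only for this illustration.
UI_ACTION_WORDS = {"click", "type", "press", "tap", "select", "scroll", "hover"}
OUTCOME_WORDS = {"verify", "confirm", "ensure", "check", "lands", "sees", "completes", "works"}


def classify_specificity(prompt: str) -> str:
    """Place a test prompt on a rough three-point spectrum.

    Returns "under-specified", "milestone", or "over-specified".
    The thresholds below are arbitrary assumptions, not tuned values.
    """
    words = re.findall(r"[a-z']+", prompt.lower())
    ui_actions = sum(word in UI_ACTION_WORDS for word in words)
    outcomes = sum(word in OUTCOME_WORDS for word in words)

    # Several literal UI actions suggest a click-by-click script.
    if ui_actions >= 3:
        return "over-specified"
    # No stated outcome, or very little text, leaves nothing to verify.
    if outcomes == 0 or len(words) < 6:
        return "under-specified"
    # Otherwise the prompt names outcomes without scripting every step.
    return "milestone"
```

Under these assumptions, “Just test my site” comes back as under-specified, the sign-up milestone sentence above comes back as milestone, and the click-by-click version comes back as over-specified.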

So the question Denise was trying to answer: how do you build an AI-assisted authoring experience that nudges users toward the level of detail that actually produces reliable tests?

She’d prototyped three approaches. (1) A binary gate that gives a simple yes/no depending on whether your test prompt is sufficient. (2) A gap coach that shows you specifically what’s missing. And (3) a clarifying Q&A coach that asks questions until it has enough to work with.

Before evaluating any of them, though, there’s a more fundamental question we decided to tackle.

Whose Problem Is This?

It’s easy to describe this challenge in system terms: how do we ensure users provide input that maximizes Variata’s success rate? That framing is accurate. It’s also a trap, because it centers the product’s needs rather than the user’s.

Flip it around. What’s the user actually trying to do?

Denise described a Product Leader she’d spoken with, someone who manually runs twenty user flows every morning. An hour of clicking through their own site with a coffee, testing that the promo codes work, the checkout completes, the filters behave. Not because it’s in their job description. Because they feel personally responsible for their site.

That person’s problem isn’t “I need to author test scenarios at the right level of abstraction for an AI system.” Their problem is: I have a process that works, I want it to keep working, and I’d like my morning back.

That reframe matters because it changes what success looks like. The authoring tool isn’t asking users to learn a new skill. It’s asking them to hand over something they already do and trust that it’ll be done right.

Reframe the problem from the user’s side before designing solutions. “How do we get better input for our system” and “how do I get my morning back” lead to very different products.

Who Exactly Are You Building For?

Denise had mapped out several personas: QA testers, product managers, developers, enterprise buyers, self-serve evaluators. She’d segmented by release cadence and personal risk. The users who keep coming back are the ones who have to sign off on revenue-driving releases at least monthly.

But “people who sign off on releases” is still a broad group. And a self-serve product can only have one front door.

The narrowing question: who, specifically, is going to try this tool on their own, fall in love with it, and then fight to get it adopted inside their company?

Not the QA engineer, at least not at first. Some will resist a tool that automates their core job. The person who will champion Variata from the bottom up is the product leader or VP who does QA out of intrinsic motivation. They’re doing it because nobody else will, they care about quality, and they’d happily hand it off to a system they trust.

That person also happens to have the organizational leverage to push deals forward from below while the Variata sales team works with executives from above.

Pick the champion, not the job title. The user most likely to adopt and evangelize your self-serve product isn’t always the one whose role most obviously matches your category. Look for intrinsic motivation plus organizational influence.

What Already Works

Here’s where the conversation got interesting. Before evaluating the three prototypes, it’s worth asking: what happens in the manual version that works so well?

When Denise’s team onboards a new customer by hand, what does the session actually look like? What’s on the screen? Where does the input come from?

Her answer was surprising. Users don’t typically pull up their live product and walk through it together. Instead, they show up with requirements documents from Jira tickets, specs, runbooks, and email attachments describing features they need tested. Sometimes the features don’t even exist in a live environment yet. The user is working from a written description of something they didn’t build and may never have seen running.

So Denise’s team takes that document and, sitting alongside the user, translates it into testable scenarios at the right level of abstraction. They author a few together so the user can see the pattern. Then they send the user off to do the rest as homework.

That’s the workflow. And it reframes the entire product challenge into one sentence: help people turn their requirements documents into testable scenarios.

Not “build a chatbot that asks smart questions.” Not “create a test recorder that watches you click.” Just: take the artifact the user already has and transform it into something Variata can run.

Study your manual process before automating it. The best self-serve products don’t invent new workflows. They bottle the proven ones. If your team already knows what works in the white-glove version, the product’s job is to encode that, not reimagine it from scratch.

The Case Against Questions

Back to the three prototypes: (1) a pass/fail binary gate on your testing inputs, (2) a coach that shows you the gaps between your input and expected structure, and (3) a Q&A workflow. With the problem compressed to “requirements in, testable scenarios out,” each approach looks different.

The binary gate is clearly too blunt. Telling someone “not enough detail, try again” when you have an LLM that can reason about exactly what’s missing is, as Denise put it, “a little bit lazy.”

The clarifying Q&A flow is more sophisticated. It mimics the experience of Claude Code or Codex: thinking, then surfacing questions like “What’s the expected outcome of a successful sign-up?” with selectable options. It feels smart.

But there’s a structural problem with questions: how do you know when to stop asking them? There’s a fine line between “your questions are helpful” and “your questions are annoying,” and that line moves depending on the user’s patience and context. Ask too few and you don’t have enough to work with. Ask too many and the user gives up.

More importantly, questions don’t teach. If the AI asks clarifying questions today, it’ll have to ask clarifying questions again tomorrow. The user never learns what a good testable scenario looks like. Instead they just get walked through one instance.

The gap coaching approach does something different. It shows the user a target that lets them build a mental model: here’s what a complete, well-formed scenario looks like. Combined with highlights of the specific gaps in what they provided, that target can be powerful. Instead of an interrogation, it’s a progress bar toward a visible standard.

That means the user learns the shape of a good scenario. Next time through, they need less help. The product is building user capability, not user dependency.
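The difference between the binary gate and the gap coach can be sketched in a few lines. This is a minimal illustration, not Variata’s implementation: the four-part scenario shape in `REQUIRED_PARTS` and the `ScenarioDraft`, `binary_gate`, `find_gaps`, and `coach_report` names are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical target shape for a well-formed, milestone-level scenario.
REQUIRED_PARTS = ("flow", "starting_point", "milestones", "expected_outcome")


@dataclass
class ScenarioDraft:
    """What the user has supplied so far; empty values count as gaps."""
    flow: str = ""
    starting_point: str = ""
    milestones: list[str] = field(default_factory=list)
    expected_outcome: str = ""


def find_gaps(draft: ScenarioDraft) -> list[str]:
    """Return the names of the required parts that are still empty."""
    return [part for part in REQUIRED_PARTS if not getattr(draft, part)]


def binary_gate(draft: ScenarioDraft) -> bool:
    """The blunt version: pass or fail, with no hint about why."""
    return not find_gaps(draft)


def coach_report(draft: ScenarioDraft) -> str:
    """The coaching version: show the whole target and mark what is missing."""
    gaps = find_gaps(draft)
    done = len(REQUIRED_PARTS) - len(gaps)
    lines = [f"{done}/{len(REQUIRED_PARTS)} parts complete"]
    for part in REQUIRED_PARTS:
        mark = "missing" if part in gaps else "ok"
        lines.append(f"  [{mark}] {part}")
    return "\n".join(lines)


draft = ScenarioDraft(flow="sign-up", expected_outcome="user lands on the dashboard")
print(binary_gate(draft))
print(coach_report(draft))
```

Both functions rely on the same gap check. The gate collapses it to a yes or no, while the report prints every part of the target, so the user sees the full shape of a good scenario each time they use it.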

Show what good looks like instead of interrogating toward it. A coaching UI that reveals the target teaches users to self-serve over time. An open-ended Q&A creates a recurring dependency on the system. The best onboarding doesn’t just get users through — it makes them better.

The Bigger Pattern

Denise walked into this conversation with a clear picture of Variata’s overall story and ROI journey. All we did together was zoom into one specific moment in that journey and get concrete about who’s there, what they’re holding, and what they need next.

The pieces were all present in her research. The Product Leader with the morning coffee ritual. The requirements docs that users show up with. The white-glove process that already works. The insight that coaching beats interrogation.

Sometimes product work isn’t about generating new ideas. It’s about compressing what you already know until the next move becomes obvious.

Want a workspace to have your own product clarity sessions? Try https://somehow.sh